Having taken a general engineering course my senior year of high school while I was researching Neural Networks, I decided it was time to make a physical agent: a tangible robot controlled by a Neural Network. My background in robotics helped here as well, since I already knew how servos and ultrasonic sensors worked.

Before getting to the hard part, writing the code to control the robot, I had to build the agent itself. I knew I wanted to make a robot that learned how to move, but I didn’t have 2 motors. Instead, I decided to use servos: they not only allow movement, but the motion itself is complex enough that it takes a fair amount of learning to master. For parts, I used an Arduino Uno with 2 Parallax standard servos and 1 HC-SR04 ultrasonic sensor. A friend of mine, Clay Busbey, helped out by 3D printing parts for me. I had 3 parts printed: the main chassis and 2 essentially straight bars that together form 1 jointed arm. I put the Arduino Uno and a half+ breadboard onto the chassis and wired everything up from there.

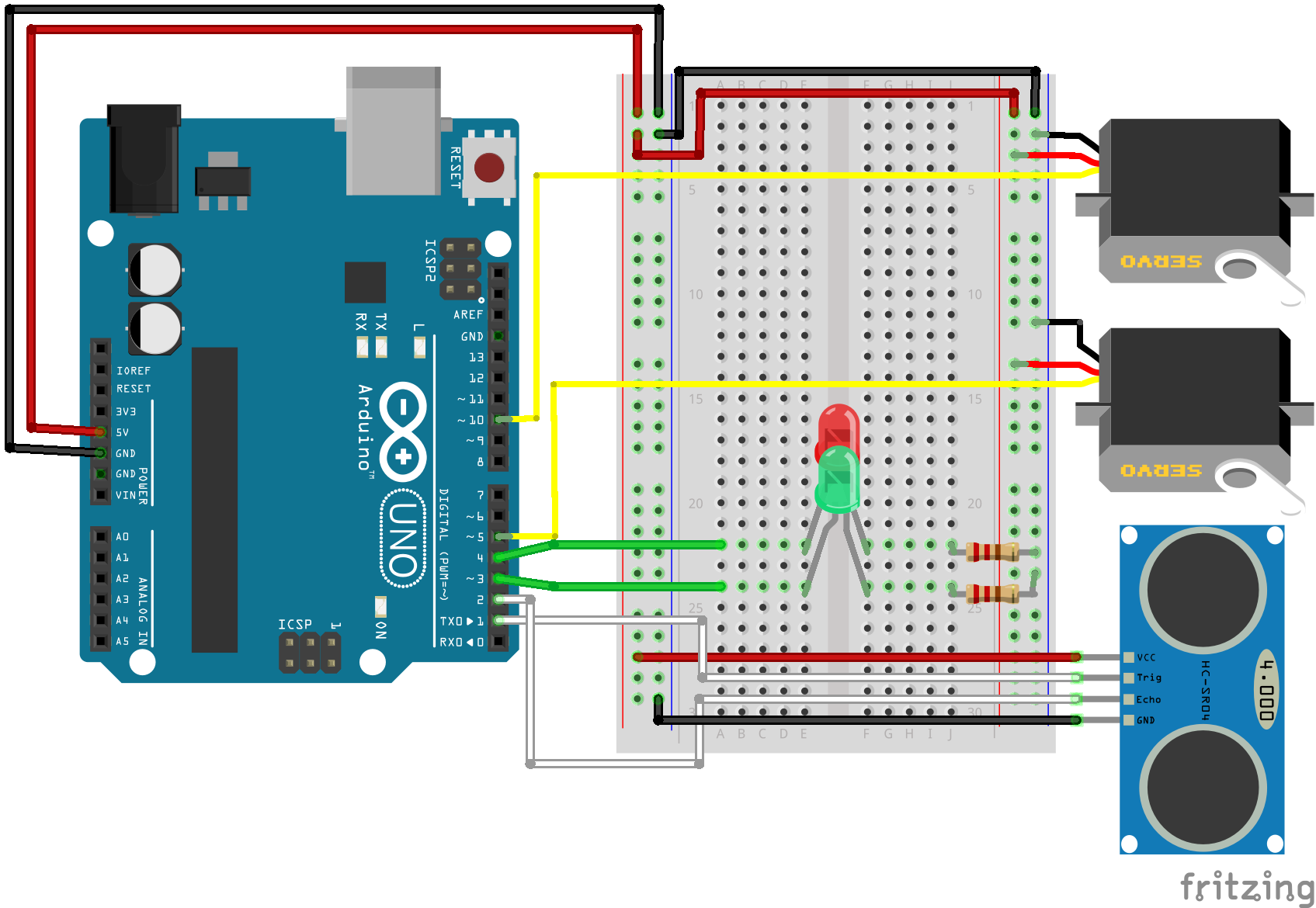

Fritzing diagram of how I hooked up all of the components. It doesn’t show the bars used for the arm, but you’ll see how that works in the next gif. The red and green LEDs indicate whether the Arduino is switching between Networks: red means it’s switching, and green means the movements are being controlled by a Neural Network.

In terms of the Neural Network, I used 3 input nodes, 1 hidden layer of 10 nodes, and 2 output nodes. The inputs are the current position of each servo along with the current distance to the object detected by the ultrasonic sensor. The outputs decide whether each servo should increase or decrease its current angle: a value above 0.5 means increase, below 0.5 means decrease. I used a sigmoid function, 1 / (1 + e^(-x)), as my activation function.

Each Neural Network controls one motion, and a genetic algorithm replaces the least successful Network: the 2 best networks produce a child through crossover and mutation, and that child replaces the worst network. Fitness is assigned based on how far the robot moved while under the control of each Neural Network.
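To make the network shape concrete, here is a minimal Python sketch of a 3-10-2 sigmoid forward pass and of turning the two outputs into servo moves. It is not the Arduino script from the .zip linked at the end of this post. The per-node bias terms, the input scaling (angles divided by 180, distance capped at a hypothetical `max_distance_cm` of 200), the 5-degree `step_deg`, the 0 to 180 degree clamp and every function name here are assumptions made for illustration.

```python
import math
import random

N_IN, N_HIDDEN, N_OUT = 3, 10, 2  # layer sizes from the post


def sigmoid(x: float) -> float:
    """Logistic activation from the post: 1 / (1 + e^(-x))."""
    return 1.0 / (1.0 + math.exp(-x))


def random_layer(n_nodes: int, n_inputs: int, rng: random.Random):
    """One row per node: n_inputs weights followed by a bias (assumed)."""
    return [[rng.uniform(-1.0, 1.0) for _ in range(n_inputs + 1)]
            for _ in range(n_nodes)]


def layer_output(weights, inputs):
    """Weighted sum plus bias for each node, squashed through the sigmoid.
    zip() stops at len(inputs), so row[-1] is only used as the bias."""
    return [sigmoid(sum(w * x for w, x in zip(row, inputs)) + row[-1])
            for row in weights]


def forward(hidden_w, output_w, inputs):
    """3 inputs -> 10 sigmoid hidden nodes -> 2 sigmoid outputs."""
    return layer_output(output_w, layer_output(hidden_w, inputs))


def make_inputs(angle_1, angle_2, distance_cm, max_distance_cm=200.0):
    """Hypothetical scaling: servo angles over 180, distance capped and scaled."""
    return [angle_1 / 180.0, angle_2 / 180.0,
            min(distance_cm, max_distance_cm) / max_distance_cm]


def next_angles(angles, outputs, step_deg=5):
    """Output > 0.5 raises that servo's angle, otherwise it is lowered.
    The step size and the 0-180 degree clamp are assumptions."""
    return [max(0, min(180, a + (step_deg if o > 0.5 else -step_deg)))
            for a, o in zip(angles, outputs)]


# Example: one control step for a randomly initialised network.
rng = random.Random(0)
hidden_w = random_layer(N_HIDDEN, N_IN, rng)
output_w = random_layer(N_OUT, N_HIDDEN, rng)
outputs = forward(hidden_w, output_w, make_inputs(90, 45, 30.0))
angles = next_angles([90, 45], outputs)
```

Because each output is only compared against 0.5, every control step moves both servos one way or the other; the network never chooses to hold still. A value of exactly 0.5 falls to "decrease" in this sketch, which is an arbitrary choice.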

Timelapse of the agent moving across the floor (sped up 3x). Although it gets off to a good start, it hiccups partway through, due to either a bad breed or a bad mutation.
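The replacement step from the Neural Network description (the 2 best networks breed a child that overwrites the worst) can be sketched roughly as below. The post does not say which crossover or mutation operators the Arduino script uses, so the flat weight-vector genome (62 weights, assuming a 3-10-2 network with one bias per node), uniform crossover, Gaussian mutation with its `rate` and `scale`, and the hypothetical `fitness_from_distance` helper (change in ultrasonic reading as a proxy for distance travelled) are all assumptions for illustration.

```python
import random

# Hypothetical genome size: a 3-10-2 network with one bias per node,
# flattened into a single list of weights.
GENOME_LENGTH = 10 * (3 + 1) + 2 * (10 + 1)  # 62


def fitness_from_distance(start_cm: float, end_cm: float) -> float:
    """Hypothetical fitness: how far the ultrasonic reading changed while
    one network was in control, used as a proxy for distance travelled."""
    return abs(start_cm - end_cm)


def crossover(parent_a, parent_b, rng: random.Random):
    """Uniform crossover: each weight is copied from one parent at random."""
    return [a if rng.random() < 0.5 else b for a, b in zip(parent_a, parent_b)]


def mutate(genes, rng: random.Random, rate: float = 0.1, scale: float = 0.5):
    """Gaussian mutation: each weight is nudged with probability `rate`."""
    return [g + rng.gauss(0.0, scale) if rng.random() < rate else g
            for g in genes]


def replace_worst(population, fitness, rng: random.Random) -> int:
    """Breed the two fittest genomes and overwrite the least fit one in place.

    Returns the index that was replaced; the caller should re-score that
    slot after the new network has had its turn controlling the robot.
    """
    assert len(population) == len(fitness) >= 3, "need at least 3 networks"
    order = sorted(range(len(population)), key=lambda i: fitness[i], reverse=True)
    best, second, worst = order[0], order[1], order[-1]
    child = mutate(crossover(population[best], population[second], rng), rng)
    population[worst] = child
    return worst


# Example: four networks, each scored after its turn (start, end) in cm.
rng = random.Random(0)
population = [[rng.uniform(-1, 1) for _ in range(GENOME_LENGTH)] for _ in range(4)]
readings = [(60, 52), (60, 41), (60, 58), (60, 47)]
fitness = [fitness_from_distance(s, e) for s, e in readings]
replaced = replace_worst(population, fitness, rng)  # slot 2 scored lowest
```

In this scheme the child is inserted whether or not it turns out to be any good; it only gets a fitness score after it has controlled the robot. That is one way a bad breed or mutation could show up as a temporary hiccup like the one in the timelapse.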

I enjoyed working on this project. It’s one thing to watch a program learn in a virtual world, and quite another to watch it learn in the real world.

If you want the Arduino script and/or the .stl files for 3D printing the parts, you can get them here (.zip, 15 KB): https://drive.google.com/file/d/0Bz_0wgRmDpKqdGdGUS1YMk9oN1E/view?usp=sharing
